Understand Video Skills

When you build a video skill, users can control their entire video experience by using voice. Customers can control video devices and consume video content without invoking a specific skill. For example, the customer can ask Alexa the following without specifying a video device or content provider:

- User: Alexa, play Star Wars: Return of the Jedi.

- User: Alexa, turn on my video device.

- User: Alexa, lower the volume on my video device.

What is a video skill?

Video skills focus on the video experience. The video interfaces expose the following functionality:

- Search and play content

- Playback controls, such as pause and rewind

- Adjust the volume

- Turn a video device on or off

- Change the video device input

- Launch an app or GUI shortcut

- Record video content

- Channel navigation

- Video catalog ingestion

A video skill offers advantages over a custom skill for video. The video interfaces use a pre-built voice interaction model, which gives you a set of predefined utterances that users say to control your device. Through these interfaces, Alexa is aware of the video devices and services that a user has or subscribes to, and enables the user to control experiences across these devices and services. In contrast, custom skills require users to invoke a skill by name, and they aren't aware of the user's broader set of devices and services.

For example, suppose a video provider produces original content, a new television series called "The Adventures of Sam." If that provider uses the Video API to create an Alexa skill, Alexa can ingest that content into its content catalog and then knows which provider offers it. When a customer says, "Alexa, play The Adventures of Sam," Alexa identifies the content provider and walks the customer through the process of enabling the appropriate skill. Without the Video API, a customer would have to remember that "The Adventures of Sam" came from a specific provider, make sure they had enabled that provider's custom skill, and then ask the skill for the content.

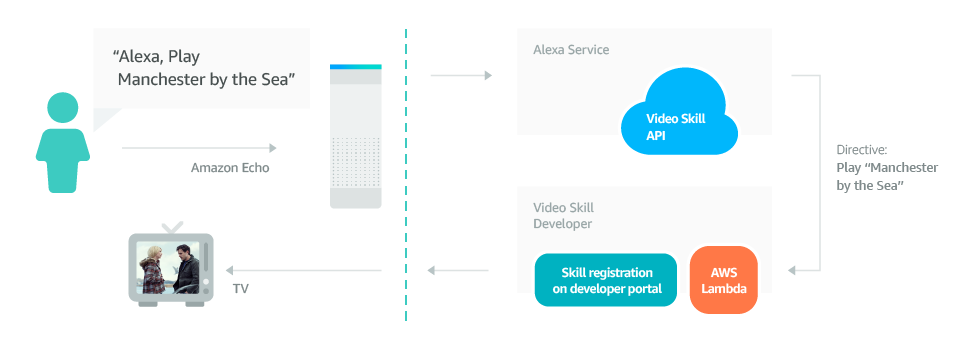

Understand how a video skill works

An Alexa video ecosystem contains the following:

- Customer: The person interacting with the Alexa device and the subscriber to your video service.

- Video Skill API: Alexa understands the pre-built voice commands and converts them to directives (JSON messages) that are sent to video skills.

- AWS Lambda: A compute service offered by Amazon Web Services (AWS) that hosts the video skill code.

- Video Skill: Code and configuration that interpret directives and send messages to a video app or device to complete the customer request. The term "video skill" is used more generally to refer to all the components and services involved in integrating the Video API.
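The flow described above, in which Alexa sends a directive and the skill code interprets it and messages the device, can be sketched as a small Lambda handler. This is a minimal illustration, not a reference implementation. It assumes the directive carries a `header` with `namespace` and `name`, and that the skill replies with an `Alexa` `Response` or `ErrorResponse` event. Check the interface names used here and the exact message fields against the Video Skill APIs and the Alexa Interface Message and Property Reference. `ROUTES`, `send_to_device`, and `SENT_COMMANDS` are hypothetical names introduced only for this example.

```python
import uuid

# Hypothetical stand-in for cloud-to-device messaging: a real skill would
# forward each command to the video device or app instead of appending here.
SENT_COMMANDS = []


def send_to_device(endpoint_id, command, payload):
    """Record the command that would be sent to the device (illustration only)."""
    SENT_COMMANDS.append(
        {"endpointId": endpoint_id, "command": command, "payload": payload}
    )


def build_event(name, directive, payload):
    """Build a response event, echoing the correlation token when one is present."""
    header = {
        "namespace": "Alexa",
        "name": name,
        "payloadVersion": "3",
        "messageId": str(uuid.uuid4()),
    }
    token = directive["header"].get("correlationToken")
    if token is not None:
        header["correlationToken"] = token
    endpoint_id = directive.get("endpoint", {}).get("endpointId")
    return {
        "event": {
            "header": header,
            "endpoint": {"endpointId": endpoint_id},
            "payload": payload,
        }
    }


# Assumed (namespace, name) pairs mapped to hypothetical device commands.
ROUTES = {
    ("Alexa.RemoteVideoPlayer", "SearchAndPlay"): "play",
    ("Alexa.PlaybackController", "Pause"): "pause",
    ("Alexa.ChannelController", "ChangeChannel"): "change_channel",
}


def lambda_handler(event, context):
    """Route one directive to a device command and return a response event."""
    directive = event["directive"]
    header = directive["header"]
    command = ROUTES.get((header["namespace"], header["name"]))
    if command is None:
        # Unknown directive: report an error instead of guessing.
        return build_event(
            "ErrorResponse",
            directive,
            {"type": "INVALID_DIRECTIVE", "message": "Directive not supported."},
        )
    endpoint_id = directive.get("endpoint", {}).get("endpointId")
    send_to_device(endpoint_id, command, directive.get("payload", {}))
    return build_event("Response", directive, {})
```

A table-driven router keeps the mapping from directives to device commands in one place, so supporting another interface means adding one entry rather than another branch.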

What kinds of devices does the Video API support?

You can incorporate Alexa video skills for three types of devices:

- Living room entertainment devices: Set-top boxes, gaming consoles, and smart TVs. If you're a device manufacturer building these types of devices, you can build video skills directly into your device or your device cloud app to allow customers to launch apps, navigate channels, and more. Video Skill APIs use the public IMDb catalog for live TV and video-on-demand content. To implement a video skill, you build an AWS Lambda function to support play, search, navigate, and record functionality. Your skill handles cloud-to-device communication, and your device software sends state information to your skill. For details, see Steps to Build a Video Skill for Living Room Entertainment Devices.

- Fire TV apps on Fire TV: Video skills for Fire TV apps allow customers to use natural language commands to search for your app's content, launch your app, control media playback, change the channel, and more. For example, your video skill can enable customers to say phrases like "Play Bosch from [app name]" or "Play Bosch," and your app plays the media. To implement a video skill for your Fire TV app, you primarily implement the Alexa Video API. However, the integration also includes Amazon Device Messaging (ADM), AWS Lambda, and more. Incorporating a video skill for your Fire TV app gives customers the richest voice experience with your content, which increases engagement and discovery. To get started, see Video Skills for Fire TV Apps Overview.

- Multimodal devices: Alexa-enabled devices, such as the Echo Show, that offer both audio and visual interfaces. Multimodal devices use an "app-less" framework that reuses your existing Amazon catalog integration along with your existing HTML5 web player for playback. Multimodal devices also provide templates for rendering browse and search experiences on the device. As with the Fire TV app implementation, to implement video skills on multimodal devices, you develop a Lambda function to respond to requests from Alexa and pass the responses back to the web player. Your responses contain the content URIs to play the requested media. To get started, see Video Skills for Multimodal Devices Overview.
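As an illustration of the multimodal case, where the Lambda function's response carries the content URI that the web player loads, the lookup step might look like the sketch below. Everything in it is an assumption made for the example: the in-memory `CATALOG`, the `find_content_uri` and `build_playback_payload` helpers, the `found` and `contentUri` field names, and the example.com URI. The actual message schema is defined by the Video Skill APIs, not by this sketch.

```python
from typing import Optional

# Hypothetical in-memory catalog mapping content IDs to titles and playback URIs.
# A real skill would query the provider's own catalog service instead.
CATALOG = {
    "sam-s01e01": {
        "title": "The Adventures of Sam",
        "uri": "https://video.example.com/play/sam-s01e01",
    },
}


def find_content_uri(title: str) -> Optional[str]:
    """Return the playback URI for a title, ignoring case and outer whitespace."""
    wanted = title.strip().casefold()
    for item in CATALOG.values():
        if item["title"].casefold() == wanted:
            return item["uri"]
    return None


def build_playback_payload(title: str) -> dict:
    """Build an illustrative payload for the web player (field names are made up)."""
    uri = find_content_uri(title)
    if uri is None:
        return {"found": False}
    return {"found": True, "contentUri": uri}
```

For example, `build_playback_payload("the adventures of sam")` returns a payload whose `contentUri` points at the catalog entry, and an unknown title returns `{"found": False}` so the caller can report that the content is unavailable.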

Who can develop video skills for Alexa?

Anyone can develop a video skill. Developing video skills doesn't require you to parse voice utterances, as this work is done by Amazon's Alexa service. The Alexa service knows how to interpret the user's speech and what messages to send to your video skills.

Generally, video skill development falls into two categories:

- Device manufacturers who want to enable voice interactions with their devices. Device manufacturers implement video skills on living room entertainment devices.

- Video service providers who want to enable voice interactions with their video services. Video service providers implement video skills for Fire TV apps and multimodal devices.

Prerequisites to video skill development

To develop a video skill, you must have the following:

- An Amazon developer account.

- A connected device with a cloud API or cloud-enabled video service.

- An Alexa device such as Amazon Echo.

- An AWS account. You host your skill code as an AWS Lambda function.

- Knowledge of Java, Node.js, Python, or C#. You can write Lambda functions in any of these languages.

- An understanding of OAuth 2.0.

Supported locales

Review the video APIs to find the directives to use in your skill to control your video endpoints. For details about the supported locales and languages for video APIs, see List of Alexa Interfaces and Supported Languages.

Documentation guide

| Topic | Description |
|---|---|
| Video Skills for Fire TV Apps | Step-by-step guide to create a video skill for Fire TV apps. |
| Video Skills for Multimodal Devices | Step-by-step guide to create a video skill for multimodal devices such as Echo Show. |
| Video Skills for Living Room Entertainment Devices | Step-by-step guide to create a video skill for living room entertainment devices such as set-top boxes, gaming consoles, and smart TVs. |
| Video Skill APIs | The video interfaces to implement in your Alexa video skill. |
| Smart Home Skill APIs | Interfaces available to smart home and video skills. |
| Alexa Interface Message and Property Reference | Describes the structure of messages and parameters to and from Alexa. |
| State Reporting for Video Skills | Describes when and how to send change reports to Alexa when the device state changes. |
| Device Discovery | Describes Alexa device discovery interface and discovery format. |
| Content Catalog Ingestion | Describes how to provide your media content catalog to Alexa. Catalog ingestion improves speech recognition accuracy for media entities, such as channels, TV shows, and movies. |
| Lineup and MSO Information | Describes how live TV providers can specify an MSO name and lineup that's specific to each user or zip code. |

Last updated: Oct 30, 2025